From CORBA to Claude

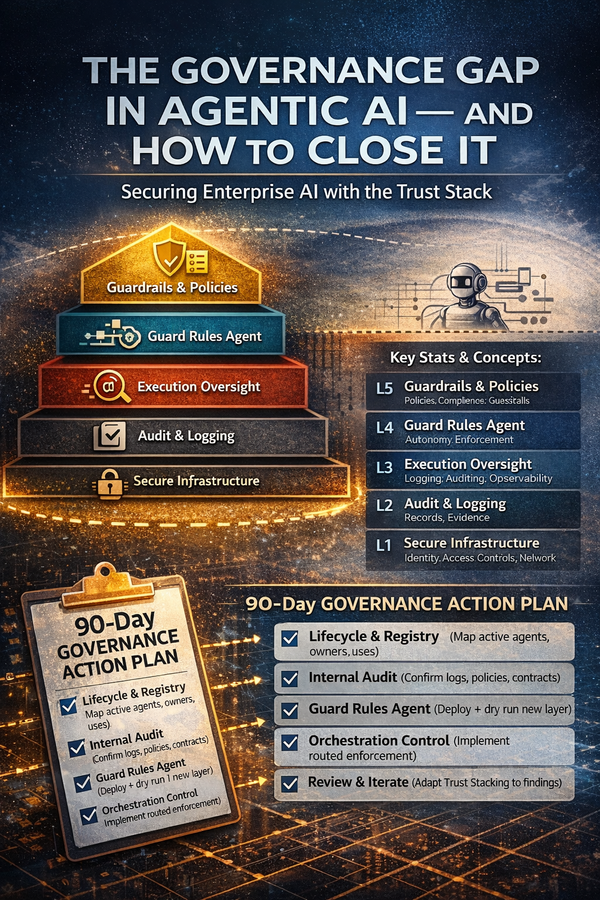

The Long Refactoring of Distributed Intelligence

How we traded the comfort of deterministic contracts for the power of probabilistic reasoning

The Integration Nightmare That Launched a Thousand Standards

In 1997, a major Wall Street trading firm spent fourteen months trying to connect a Tandem mainframe running their risk engine to a Windows NT workstation displaying real-time positions. Both systems worked flawlessly in isolation. Together, they produced a logistical nightmare of marshaling errors, firewall port conflicts, and cryptic DCOM configuration screens that seemed designed to humiliate senior engineers. The project eventually shipped—three deadlines late, two architects short, and with a maintenance burden that would haunt the firm for a decade.

Today, AI agents coordinate handoffs of comparable complexity in seconds. A hiring manager's agent tasks a sourcing agent to find candidates; the sourcing agent collaborates with scheduling and background-check agents; results flow back through a cascade of autonomous decisions. No IDL files. No registry entries. No port negotiations. Just natural language descriptions and learned policies.

This isn't magic—it's the culmination of thirty years of painful lessons about what distributed systems actually need. The journey from CORBA to Claude reveals something important: interoperability never went out of style. Only the mechanisms did.

We didn't abandon distributed objects because the idea was wrong. We abandoned them because the world stopped matching their assumptions.

I. The World They Built For

CORBA, COM, and DCOM emerged from a computing reality that no longer exists. Understanding that world isn't just historical curiosity—it explains why these protocols succeeded brilliantly for a decade before becoming anchors dragging enterprises backward.

The Promise of Location Transparency

The Object Management Group was founded in 1989 with an audacious goal: make heterogeneous machines speak one language. CORBA 1.1, published in 1991, delivered something genuinely revolutionary: location transparency. A client could invoke methods on an object reference without knowing whether that object lived in the same process, on the same machine, or across the Atlantic. The Object Request Broker handled everything: marshaling, transport, activation, even failure recovery.

For telecom companies routing millions of calls through distributed switches, this was transformative. For financial institutions running trading systems across Unix workstations and mainframe backends, it eliminated entire categories of integration code. Douglas C. Schmidt at Vanderbilt built real-time CORBA systems for aerospace applications that would have been unthinkable with proprietary protocols. Michael Stal at Siemens deployed CORBA across European industrial systems where no single vendor's stack could meet requirements.

Microsoft took a different path. COM, introduced in 1993, focused on binary compatibility rather than network transparency. Where CORBA compiled IDL files into language-specific stubs, COM defined vtable-based interfaces—virtual function tables that let components written in different languages link at runtime. When DCOM extended this model across networks in 1996, Windows developers gained distributed computing without leaving the familiar Visual Studio environment.

What These Protocols Assumed

Every successful technology embodies assumptions about its operating environment. CORBA and DCOM assumed stable networks with perimeter firewalls defining trust boundaries. They assumed structured data flowing through well-defined schemas. They assumed interfaces that changed rarely and deployments measured in years rather than hours. Most fundamentally, they assumed deterministic execution: the same inputs always produce the same outputs.

These assumptions weren't naive—they accurately described enterprise computing in the 1990s. LANs were indeed stable. Corporate WANs were indeed managed. Data was indeed mostly structured. And determinism was so obviously desirable that nobody thought to question it.

Then the world changed.

II. Death by a Thousand Paper Cuts

CORBA didn't die in a dramatic collapse. It died slowly, one frustrated engineer at a time, as its elegant architecture collided with operational reality. Michi Henning, who sat on the OMG's architecture board and worked as an ORB implementer, documented the autopsy in his ACM Queue article "The Rise and Fall of CORBA." His verdict was damning: CORBA failed not despite its sophistication but because of it.

The Versioning Catastrophe

Here's the scenario that broke enterprises: You need to add a field to a data structure. In a web API, you add the field, deploy the new version, and let clients adopt it at their leisure. In CORBA, changing a structure broke the on-the-wire contract between every client and server. You couldn't gradually migrate. You had to replace everything simultaneously—every client, every server, every intermediary—in a single coordinated deployment.

CORBA provided no adequate versioning mechanism. "Versioning by derivation," the recommended approach, proved utterly inadequate for commercial software requiring gradual, backward-compatible upgrades. Enterprises found themselves frozen on ancient interface versions, unable to evolve without massive, coordinated deployments that nobody wanted to schedule. Entire architectures became stuck in time, accumulating technical debt with compound interest.
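The breakage described above can be illustrated with a rough analogy. The sketch below uses Python's `struct` module as a stand-in for a positional binary encoding; it is not CORBA's CDR, and the record layouts and field names are hypothetical. It shows an old client failing on a message that gained one field, while a name-keyed JSON reader simply ignores the field it does not know.

```python
import json
import struct

# Hypothetical v1 record: account id (uint32) and amount (float64), packed by position.
V1_LAYOUT = "<Id"
# Hypothetical v2 record: a 3-byte currency code inserted between the two fields.
V2_LAYOUT = "<I3sd"


def v1_client_decode(payload: bytes) -> tuple:
    """Decode a payload the way a client compiled against v1 would."""
    return struct.unpack(V1_LAYOUT, payload)


# A v2 server sends the new layout to a client that was never redeployed.
v2_payload = struct.pack(V2_LAYOUT, 42, b"USD", 100.0)

try:
    v1_client_decode(v2_payload)
    binary_ok = True
except struct.error:
    # The buffer size no longer matches the layout the old client expects.
    binary_ok = False

# Name-keyed encoding: the old client reads the fields it knows and skips the rest.
v2_json = json.dumps({"account": 42, "currency": "USD", "amount": 100.0})
record = json.loads(v2_json)
json_view = (record["account"], record["amount"])
```

The positional format fails because meaning is carried by byte offsets that both sides must agree on in advance; the keyed format tolerates additions because each field carries its own name.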

The Firewall Problem

CORBA's architects never imagined corporate security policies would conflict with their protocol. They assumed that if you controlled the network, you controlled the ports. But as enterprises locked down perimeters, CORBA required opening ports in firewalls for each service—a requirement that security teams rejected categorically. The OMG made several attempts at specifying firewall traversal, but these efforts collapsed due to technical shortcomings and complete lack of interest from firewall vendors.

DCOM faced worse problems. Its dependency on Windows security and RPC infrastructure made cross-platform deployment nearly impossible. Configuration complexity meant objects often needed multiple install/reinstall cycles before they worked. Security vulnerabilities discovered as late as 2021 required Microsoft to implement hardening changes that broke backward compatibility—demonstrating that even Microsoft couldn't maintain DCOM safely.

The Rise of "Good Enough"

In 1999, Microsoft and DevelopMentor published the Simple Object Access Protocol (SOAP). It was slower than CORBA. It was more verbose than DCOM. But it had one killer feature: HTTP traffic through port 80 traversed firewalls without special configuration. Yes, this was arguably naive from a security perspective—tunneling everything through the web port circumvented the very controls firewalls were meant to enforce. But it removed the barrier that was strangling distributed computing adoption.

Roy Fielding's REST, formalized in his 2000 dissertation, pushed even further toward simplicity. Don Box, who'd written the authoritative book on COM and helped create SOAP, later reflected on why Microsoft moved away from binary middleware: developers would accept worse performance for easier deployment every single time. REST won not because it was better—by many technical metrics, it wasn't—but because it matched how the internet actually worked.

III. When the Ground Shifted

Legacy distributed object systems didn't just fail to keep up—they were designed for a world that ceased to exist. The comfortable assumptions of the 1990s crumbled one by one, each collapse revealing new requirements that CORBA and DCOM couldn't address.

From Perimeters to Zero Trust

NIST's SP 800-207, published in 2020, codified what practitioners already knew: perimeter security was dead. Zero trust architecture assumes no implicit trust by location, requires continuous authentication and authorization, and mandates micro-segmentation across hybrid environments. Protocols designed for "trusted networks" behind corporate firewalls became architectural liabilities in a world where the network is always hostile.

The Unstructured Data Explosion

CORBA excelled at structured data interchange—its CDR encoding handled complex type systems with impressive efficiency. But analysts now estimate that 80-90% of enterprise data is unstructured: emails, documents, images, audio, video, sensor streams. The IDL paradigm of pre-defined types cannot accommodate content that doesn't fit neat schemas. When your business runs on customer emails and support tickets and Slack messages, a protocol that requires compiling interface definitions becomes absurd.

The Probabilistic Turn

Here's the shift that changes everything: we're moving from deterministic to probabilistic computation. AlexNet in 2012 and transformers in 2017 catalyzed a fundamental reconception of what computers do. CORBA method calls produced the same result every time given the same inputs—that was the whole point. LLM-based systems are inherently probabilistic. The same prompt may produce different outputs. The same query may return different results.

This isn't a bug to be fixed—it's the source of AI's power. Probabilistic systems can handle ambiguity, generalize to novel situations, and produce creative solutions that deterministic systems never could. But it requires entirely new approaches to testing, monitoring, and reliability that legacy middleware never contemplated.
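A toy sketch can make the contrast concrete. The routing function, candidate names, and scores below are invented for illustration, and softmax sampling over three options is only a loose stand-in for how a language model decodes text. The point is narrow: one function returns the same answer on every call, the other draws from a distribution.

```python
import math
import random


def deterministic_route(order_size: int) -> str:
    """Classic RPC-style logic: identical input, identical output."""
    return "desk_a" if order_size < 1000 else "desk_b"


def sample_choice(scores: dict, temperature: float, rng: random.Random) -> str:
    """Pick one candidate by softmax sampling over its score."""
    names = list(scores)
    weights = [math.exp(scores[name] / temperature) for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


# Made-up preference scores for three hypothetical destinations.
scores = {"desk_a": 1.0, "desk_b": 0.8, "desk_c": 0.2}
rng = random.Random(0)

# Same input 200 times at a moderate temperature: several different answers appear.
distinct = {sample_choice(scores, 1.0, rng) for _ in range(200)}

# A very low temperature concentrates nearly all probability on the top score.
cold = {sample_choice(scores, 0.01, rng) for _ in range(200)}
```

Lowering the temperature narrows the spread of answers, but the mechanism remains sampling rather than lookup, which is why testing and monitoring practices built around exact-match outputs need rethinking.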

When machines make 'fuzzy' decisions instead of binary ones, we gain power at the cost of predictability. That trade-off defines the AI era.

IV. The N×M Problem and Its Solution

Before diving into modern protocols, consider the integration challenge that drove their creation. Anthropic described it as the "N×M problem": with 10 AI applications and 100 data sources, you potentially need 1,000 custom integrations. Each new application multiplies the integration burden; each new data source does the same. The combinatorial explosion makes enterprise AI deployment prohibitively expensive.

Figure 1: The Integration Explosion

WITHOUT PROTOCOL STANDARDIZATION

[App 1] ──┬── [Data Source A]

[App 2] ──┼── [Data Source B] ← N × M custom integrations

[App 3] ──┴── [Data Source C]

10 apps × 100 sources = 1,000 integrations

WITH MCP/A2A STANDARDIZATION

[App 1] ──┐ ┌── [Data Source A]

[App 2] ──┼── [PROTOCOL] ──┼── [Data Source B]

[App 3] ──┘ └── [Data Source C]

10 apps + 100 sources = 110 integrations

This is exactly the problem CORBA claimed to solve in 1991. The difference is that AI-native protocols solve it under radically different constraints: internet-scale deployment, unstructured data, probabilistic behavior, and tools that describe themselves in natural language rather than compiled IDL files.
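The arithmetic behind Figure 1 is simple enough to state as code. This is a minimal sketch with hypothetical function names; it counts one adapter per app-source pair in the point-to-point case and one adapter per participant in the shared-protocol case.

```python
def point_to_point(apps: int, sources: int) -> int:
    """Every application needs its own integration with every data source."""
    return apps * sources


def via_protocol(apps: int, sources: int) -> int:
    """Each application and each data source implements the protocol once."""
    return apps + sources


# Marginal cost of adding one more application to a 10-app, 100-source estate.
extra_without_protocol = point_to_point(11, 100) - point_to_point(10, 100)
extra_with_protocol = via_protocol(11, 100) - via_protocol(10, 100)
```

The marginal cost is the more telling number: without a shared protocol, each new application costs as many integrations as there are data sources, while with one it costs a single implementation.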

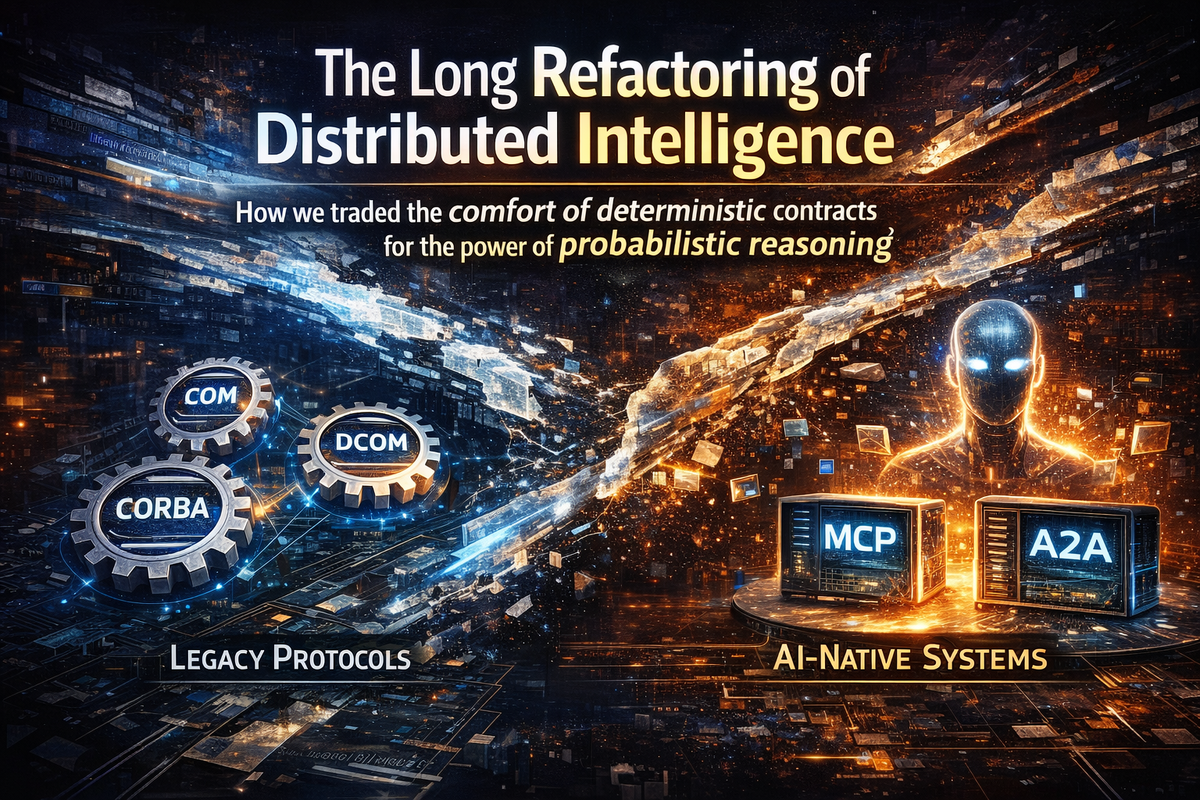

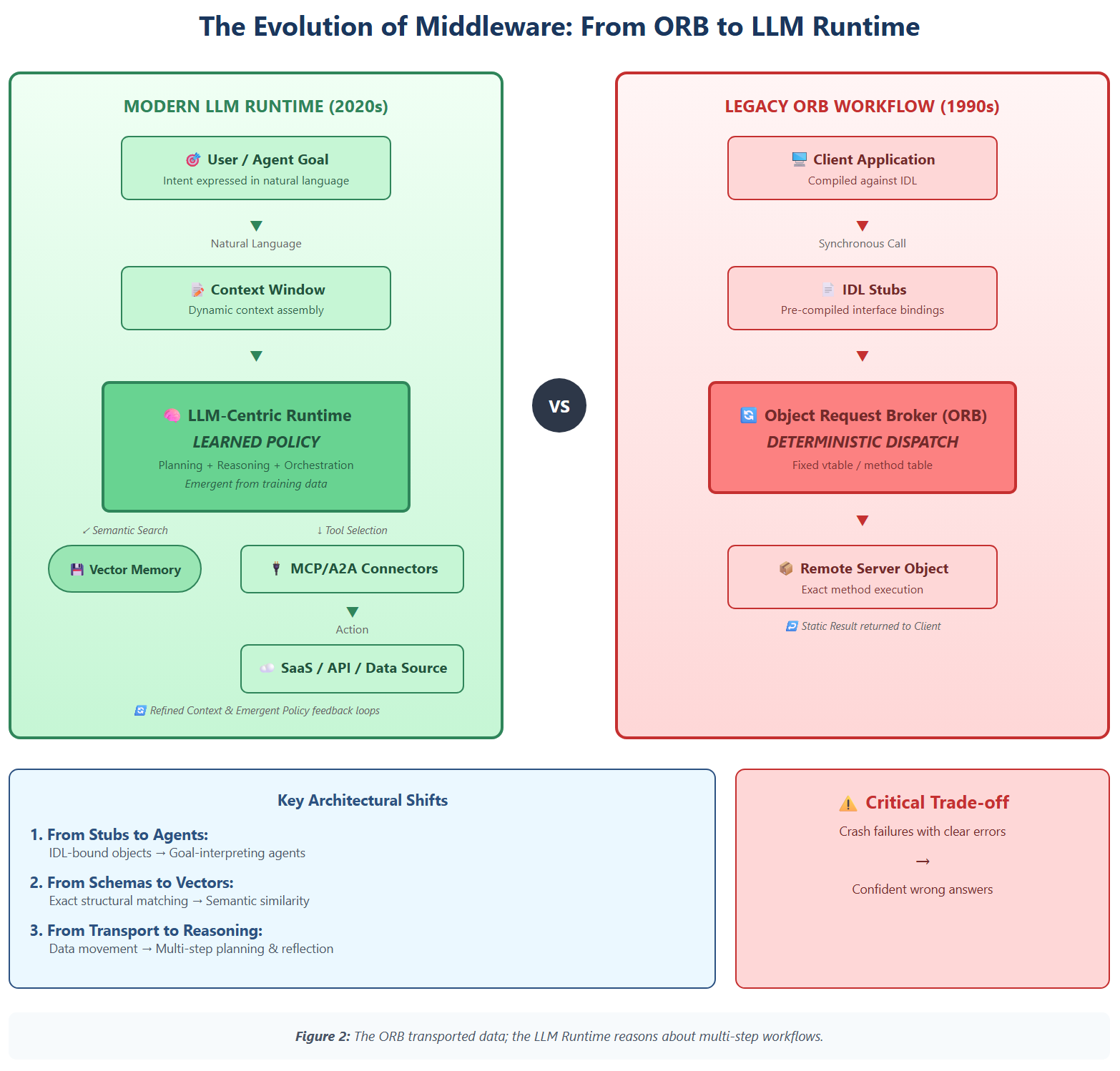

V. The New Middleware: MCP and A2A

Anthropic's Model Context Protocol (MCP), announced in November 2024, and Google's Agent2Agent (A2A), announced in April 2025, represent the distributed computing patterns emerging for the AI era. They echo CORBA's goals—interoperability, coordination, abstraction—but operate with different primitives under probabilistic semantics.

MCP: Connecting AI to Everything

MCP drew explicit inspiration from the Language Server Protocol (LSP) that Microsoft developed around 2016. Just as LSP allows a single language server to provide code intelligence to multiple editors, MCP allows a single data connector to serve multiple AI applications. The protocol exchanges JSON-RPC 2.0 messages over standard input/output for local servers or over HTTP for remote ones: no proprietary binary formats, no custom port requirements, nothing that triggers firewall alarms.
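To give a sense of how lightweight the wire format is, here is a minimal sketch that builds a JSON-RPC 2.0 request of the kind MCP uses to invoke a tool. The `tools/call` method and the `name`/`arguments` parameter shape reflect my reading of the MCP specification [8] and should be checked against it; the tool name `search_tickets` and its arguments are hypothetical.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to run one tool."""
    message = {
        "jsonrpc": "2.0",          # protocol version marker required by JSON-RPC 2.0
        "id": request_id,          # lets the caller match the response to this request
        "method": "tools/call",    # assumed MCP method name for tool invocation
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "search_tickets" is an invented tool used only for illustration.
wire = make_tool_call(1, "search_tickets", {"query": "refund", "limit": 5})
decoded = json.loads(wire)
```

Compared with compiled stubs, the entire request is plain text that any language with a JSON library can produce or inspect.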

The adoption numbers are staggering. Within one year of launch, MCP server downloads grew from roughly 100,000 to over 8 million. The ecosystem now includes more than 5,800 MCP servers and 300 clients, with 97 million monthly SDK downloads across Python and TypeScript. In December 2025, Anthropic donated MCP to the Linux Foundation's Agentic AI Foundation, with OpenAI, Google, Microsoft, and Amazon as supporters. When competitors unite around a protocol, pay attention.

A2A: When Agents Talk to Agents

MCP connects agents to tools and data. A2A solves a different problem: how do autonomous agents coordinate with each other? Announced at Google Cloud Next with support from over 50 partners including Atlassian, Salesforce, SAP, and ServiceNow, A2A specifies secure information exchange and action coordination across enterprise platforms.

A2A's key innovation is the Agent Card—a JSON document describing an agent's capabilities that enables discovery without a central registry. Agents find each other, negotiate collaboration, and coordinate workflows dynamically. Version 0.3, released July 2025, added gRPC support and signed security cards. The protocol now counts over 150 organizational supporters and has been donated to the Linux Foundation.
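An Agent Card can be pictured as a small self-description that other agents read before deciding whether to collaborate. The sketch below is illustrative only: the field names approximate the A2A schema as I understand it and have not been validated against the specification [10], and the agent, URL, and skill are invented.

```python
# A hypothetical Agent Card expressed as a Python dictionary.
agent_card = {
    "name": "Sourcing Agent",
    "description": "Finds job candidates that match a role description.",
    "url": "https://agents.example.com/sourcing",  # placeholder endpoint
    "version": "1.0.0",
    "capabilities": {"streaming": False},
    "skills": [
        {
            "id": "find-candidates",
            "name": "Find candidates",
            "description": "Search candidate pools for a given role.",
            "tags": ["recruiting", "sourcing"],
        }
    ],
}


def skills_matching(card: dict, tag: str) -> list:
    """Return the ids of skills in the card that advertise the given tag."""
    return [skill["id"] for skill in card["skills"] if tag in skill["tags"]]


recruiting_skills = skills_matching(agent_card, "recruiting")
payroll_skills = skills_matching(agent_card, "payroll")
```

A real client would fetch the card over HTTP and would likely match on descriptions semantically rather than on exact tags; exact matching is used here only to keep the example self-contained.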

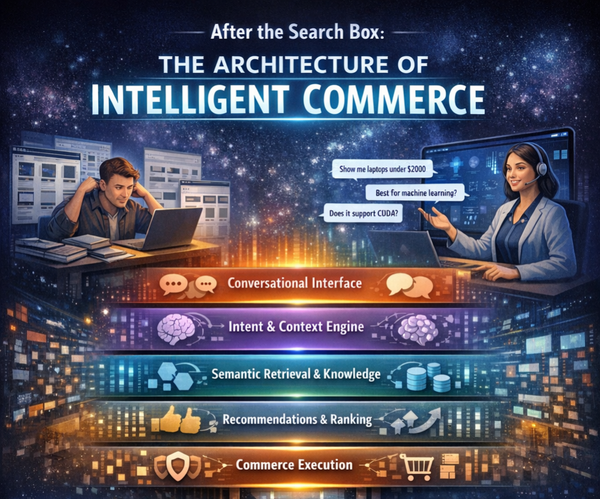

VI. From vtables to Learned Policies

This is where it gets interesting. The core goals of distributed computing haven't changed: interoperability, coordination, abstraction. But the mechanisms have evolved so dramatically that we need a translation guide. Under the hood, the "ORB" is now an LLM-centric runtime plus vector store and tool registry; the language binding is natural language, not IDL; and the dispatch logic is an emergent policy learned from data rather than a hand-coded vtable.

Figure 2: Architecture Translation

| Component | Legacy (CORBA/COM) | AI-Native (MCP/A2A) |
| --- | --- | --- |
| Runtime | Object Request Broker (ORB) | LLM + Vector Store + Tool Registry |
| Language Binding | IDL-compiled stubs | Natural language descriptions |
| Dispatch Logic | Hand-coded vtable / method table | Emergent policy learned from data |
| Type System | Rigid IDL types / COM interfaces | Embeddings as soft semantic contracts |
| Discovery | Naming service / Registry / CLSID | Agent Cards + semantic search |
| Error Handling | Exceptions with defined semantics | Graceful degradation / hallucination risk |

The vtable-to-policy shift deserves special attention. In COM, a vtable was a fixed array of function pointers: the binary standard guaranteed that the first three slots of every interface held IUnknown's QueryInterface, AddRef, and Release, in that order, with the interface's own methods following at fixed positions. The entire system depended on these guarantees. In AI-native systems, there is no vtable. Instead, the language model interprets natural-language descriptions of capabilities and decides, probabilistically, which tool to invoke. The dispatch logic emerges from training rather than compilation.
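The two dispatch styles can be contrasted in a few lines. This is a sketch under loud assumptions: the tuple below only mimics the idea of a vtable (IUnknown's three methods occupy the first three slots in real COM), the tool names and descriptions are hypothetical, and the word-overlap scorer is a deterministic stand-in for a language model's choice, not a description of how one works.

```python
# Fixed dispatch: the position in the table is the contract.
def query_interface() -> str:
    return "QueryInterface"


def add_ref() -> str:
    return "AddRef"


def release() -> str:
    return "Release"


VTABLE = (query_interface, add_ref, release)


def call_slot(index: int) -> str:
    """Invoke whatever function sits at a fixed slot, as a compiled caller would."""
    return VTABLE[index]()


# Description-driven dispatch: the caller's words are matched against tool descriptions.
TOOLS = {
    "search_candidates": "find job candidates matching a role description",
    "book_meeting": "schedule a meeting or interview on a calendar",
}


def choose_tool(request: str) -> str:
    """Pick the tool whose description shares the most words with the request."""
    words = set(request.lower().split())
    return max(TOOLS, key=lambda name: len(words & set(TOOLS[name].split())))


picked_for_interview = choose_tool("schedule an interview for Friday")
picked_for_search = choose_tool("find candidates for a data engineer role")
```

In the first style a wrong slot index is a programming error caught by the contract; in the second, a poorly worded description or request can silently steer the choice to a different tool.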

Embeddings as "Soft Contracts"

CORBA IDL and COM type libraries enforced rigid contracts: if the schema didn't match exactly, the call failed. This was intentional—strong typing caught errors at compile time rather than runtime. But it created brittleness that made evolution painful.

Vector embeddings act as "soft contracts." They encode semantic similarity, so a search for "automobile" finds documents about "cars" without explicit synonym mappings. New concepts integrate incrementally. Queries still retrieve relevant results even as schemas and ontologies evolve. This flexibility enables adaptation that rigid contracts couldn't permit.

But here's the trade-off nobody talks about enough: when a CORBA contract failed, the system crashed with a clear error. When a soft contract fails, the system might give a confident but wrong answer. A semantic mismatch doesn't throw an exception—it returns results that seem plausible but miss the actual intent. This is a fundamentally different failure mode, and we're still learning how to handle it.
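Both sides of the soft contract can be seen in a toy retrieval example. The three-dimensional vectors below are hand-made stand-ins for real embeddings, and the document names, query vectors, and the 0.5 threshold are all assumptions chosen for illustration. Nearest-neighbour search finds "cars" for an "automobile" query, but it also returns a result for an unrelated query, without raising any error.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made vectors standing in for document embeddings.
DOCS = {
    "cars": [0.9, 0.1, 0.0],
    "invoices": [0.0, 0.2, 0.9],
}


def nearest(query_vec: list) -> tuple:
    """Return the closest document and its score; this never fails."""
    name = max(DOCS, key=lambda doc: cosine(query_vec, DOCS[doc]))
    return name, cosine(query_vec, DOCS[name])


def nearest_or_none(query_vec: list, min_score: float = 0.5):
    """One possible mitigation: refuse to answer below a similarity threshold."""
    name, score = nearest(query_vec)
    return name if score >= min_score else None


automobile = [0.8, 0.2, 0.1]  # a query semantically close to "cars"
off_topic = [0.1, 0.9, 0.1]   # a query unrelated to either document

good_match = nearest(automobile)
weak_match = nearest(off_topic)
guarded = nearest_or_none(off_topic)
```

The unguarded search hands back its best guess no matter how poor the match is, which is the "confident but wrong" failure mode in miniature. A threshold is only one partial answer, and picking its value is itself a judgment call.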

Autonomous Orchestration vs. Programmed Calls

In RPC-centric systems, the caller specifies exactly what should happen: call method X with parameters Y, Z. The middleware transports and marshals—nothing more. In agentic architectures, the caller specifies a goal. The orchestrator decomposes that goal into sub-tasks, selects appropriate agents and tools, coordinates their interactions, and adapts when things go wrong.
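The difference between naming a call and stating a goal can be sketched as follows. Everything here is hypothetical: the step functions, the candidate identifiers, and above all the `plan` function, which uses a fixed lookup where a real orchestrator would rely on model-driven planning and would re-plan when a step fails.

```python
def book_interview(candidate: str) -> str:
    """A leaf operation that both styles eventually reach."""
    return f"interview booked for {candidate}"


# RPC style: the caller names the exact method and its arguments.
rpc_result = book_interview("candidate-17")


# Goal style: the caller states an outcome and the orchestrator chooses the steps.
def source(state: dict) -> None:
    state["candidates"] = ["candidate-17", "candidate-42"]


def screen(state: dict) -> None:
    state["shortlist"] = state["candidates"][:1]


def schedule(state: dict) -> None:
    state["booked"] = [book_interview(c) for c in state["shortlist"]]


STEPS = {"source": source, "screen": screen, "schedule": schedule}


def plan(goal: str) -> list:
    """Stand-in for planning: a fixed lookup, purely illustrative."""
    return {"hire": ["source", "screen", "schedule"]}[goal]


def run_goal(goal: str) -> dict:
    """Execute each planned step in order, threading shared state through them."""
    state: dict = {}
    for step in plan(goal):
        STEPS[step](state)
    return state


goal_result = run_goal("hire")
```

In the RPC version the caller owns the sequence of operations; in the goal version the sequence lives inside the orchestrator, which is where both the adaptability and the loss of direct control come from.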

Consider Google's hiring example: a manager's agent tasks a sourcing agent to find candidates, which collaborates with scheduling and background-check agents, cascading decisions through a network of autonomous actors. In COM/DCOM, you'd program every interaction path explicitly. In A2A, agents discover each other's capabilities through Agent Cards and negotiate collaboration dynamically. The system adapts to situations its programmers never anticipated.

VII. The Trade-offs Nobody Wants to Discuss

The AI industry's enthusiasm for agent protocols sometimes obscures their genuine limitations. These aren't problems to be solved with the next release—they're fundamental trade-offs baked into the probabilistic paradigm.

The Explainability Gap

CORBA method calls were fully traceable. You could examine every parameter, every return value, every intermediate state. Debugging distributed systems was hard, but at least the system did what it said it did. AI agent decisions emerge from opaque reasoning processes. Why did the agent choose Tool A instead of Tool B? The honest answer is often: we're not entirely sure. LangChain's State of AI Agents Report found that explainability remains a critical barrier—and the burden falls on engineering teams without clear solutions.

Security Surfaces We Don't Understand

Security researchers have identified multiple outstanding issues with MCP: prompt injection vulnerabilities, tool permission problems where combining safe-seeming tools can exfiltrate data, and "lookalike tools" that silently replace trusted ones. A2A faces similar challenges around agent authentication and authorization. The attack surface of AI-native systems differs fundamentally from traditional middleware, and we're discovering vulnerabilities faster than we're fixing them.

The Performance Reality

CORBA's CDR encoding was compact and fast—optimized for performance over decades of engineering. LLM-based systems consume significant computational resources and introduce latency that would have been unacceptable in the 1990s. As agent complexity grows, token usage increases, and costs scale accordingly. The flexibility of natural-language interfaces comes at a real price in compute, latency, and operational cost.

Determinism Was a Feature, Not a Bug

For regulated industries—healthcare, finance, aviation—the "same input, same output" guarantee wasn't just convenient. It was required for audit trails, compliance, and liability. Probabilistic systems that may produce different results from identical inputs create genuine governance challenges that current protocols don't address. When the regulator asks "why did the system make this decision?", "the model thought it was a good idea" isn't an acceptable answer.

VIII. Then vs. Now: A Complete Comparison

The following comparison synthesizes three decades of evolution across the dimensions that matter for enterprise architecture:

| Dimension | Legacy Protocols | AI-Native Architectures |
| --- | --- | --- |
| Primary Goal | Distributed objects on controlled networks | Autonomous agents across internet-scale estates |
| Interface Definition | IDL schemas, binary vtables | Natural language, JSON manifests, embeddings |
| Invocation Model | Deterministic RPC dispatch | Goal-driven task planning and orchestration |
| Data Assumptions | Structured data, well-defined schemas | 80-90% unstructured, vectorized retrieval |
| Network Model | Perimeter security, trusted LAN/WAN | Zero-trust, globally distributed, multi-tenant |
| Versioning | Coordinated rollout, breaking changes | Gradual adoption, agents adapt via prompts |
| Reasoning | None (middleware transports only) | Probabilistic planning and error recovery |
| State Management | Stateless RPC or explicit sessions | Contextual memory, conversation history |
| Discovery | Naming services, registries | Agent Cards, semantic search |
| Security Model | Firewall-based, Kerberos | OAuth tokens, per-request policy evaluation |
| Failure Mode | Clear exceptions, defined semantics | Graceful degradation, confident wrong answers |
| Key Trade-off | Predictability over flexibility | Flexibility over predictability |

IX. The Comfort of Control vs. The Power of Autonomy

The journey from CORBA to Claude isn't a story of progress toward an obviously better destination. It's a story of trade-offs, each generation solving problems that the previous generation couldn't handle while creating problems the previous generation never faced.

CORBA's architects gave us location transparency, interface standardization, and a coherent model for distributed objects. They couldn't anticipate internet-scale deployment, unstructured data explosions, or machines that reason probabilistically. Their assumptions matched their world perfectly; it's not their fault the world changed.

MCP and A2A solve the integration problems that strangled AI deployment—the N×M explosion, the firewall traversal nightmares, the schema brittleness that made enterprise adoption prohibitively expensive. But they create new problems: opaque reasoning, novel security vulnerabilities, and the fundamental uncertainty of probabilistic behavior. These protocols will not be the final word any more than CORBA was.

The interesting work ahead is recovering some of CORBA's clarity of contract without sacrificing the flexibility that makes AI-native systems valuable. How do you create "strong" guarantees in a probabilistic world? How do you make autonomous decisions auditable? How do you govern systems that adapt beyond their programmers' intentions?

One pattern repeats across thirty years: the industry converges on standards once proprietary approaches become friction points. OMG brought competitors together for CORBA. The Linux Foundation now hosts both MCP and A2A. When rivals unite around protocols, it signals that integration costs have become unbearable and that the time for standardization has arrived.

The ORB is now an LLM runtime. The IDL is natural language. The vtable is learned policy. Interoperability never went out of style; only the mechanisms did.

As we replace hand-coded vtables with learned policies, are we ready to trade the comfort of total control for the power of autonomous reasoning? The answer will shape enterprise computing for the next thirty years.

References

[1] M. Henning, "The Rise and Fall of CORBA," ACM Queue, vol. 4, no. 5, 2006.

[2] Object Management Group, "CORBA Specification," versions 1.1 (1991), 2.0 (1996), 3.0 (2002).

[3] D. Box, Essential COM. Reading, MA: Addison-Wesley, 1998.

[4] Microsoft Corporation, "Distributed Component Object Model (DCOM) Remote Protocol," MS-DCOM.

[5] R. Fielding, "Architectural Styles and the Design of Network-based Software Architectures," Ph.D. dissertation, UC Irvine, 2000.

[6] NIST, "SP 800-207: Zero Trust Architecture," 2020.

[7] Anthropic, "Introducing the Model Context Protocol," Nov. 2024. [Online]. Available: anthropic.com/news/model-context-protocol

[8] Model Context Protocol Specification, modelcontextprotocol.io/specification/2025-11-25

[9] Google, "Announcing the Agent2Agent Protocol," Google Developers Blog, Apr. 2025.

[10] A2A Protocol Specification, a2a-protocol.org/latest/

[11] Linux Foundation, "Agentic AI Foundation Launch," Dec. 2025.

[12] F. Buschmann et al., Pattern-Oriented Software Architecture. Chichester: Wiley, 1996.

[13] LangChain, "State of AI Agents Report," 2025. [Online]. Available: langchain.com/stateofaiagents

[14] A. Vaswani et al., "Attention Is All You Need," in Proc. NeurIPS, 2017.

[15] ISO/IEC 19500, "Information Technology—Object Management Group—Common Object Request Broker Architecture," 2012